The vocabulary of agents

Test-and-Run is a software development approach. I’m going to explain it to you, but that isn’t what this post is about. It’s about whether you knew what Test-and-Run was before I told you.

The Test-and-Run approach to coding breaks what you’re building into little parts that you can test one by one, so you can catch a problem early, before something catastrophic happens when you try to run the whole thing at once.

“But wait,” you might say, “developers test their code all the time!”

And you’d be right. But Test-and-Run goes further: The developer doesn’t just test their code. They write code to test their code. This mindset forces a developer to build a bunch of small things instead of one big thing, and to ask, ‘how might this thing fail, and how do I write code to test for that?’
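Here is a minimal sketch of what “writing code to test your code” can look like in Python. Everything in it is hypothetical: `parse_price` and its three tests are names I made up for illustration, not part of any real project. The point is only the shape: one small piece, plus code that asks how that piece might fail.

```python
def parse_price(text: str) -> float:
    """Turn a string like "$1,299.50" into the number 1299.5 (hypothetical helper)."""
    cleaned = text.strip().replace("$", "").replace(",", "")
    if not cleaned:
        # Fail loudly instead of silently returning a made-up price.
        raise ValueError("no price found")
    return float(cleaned)


def test_plain_number():
    assert parse_price("42") == 42.0


def test_currency_symbol_and_commas():
    assert parse_price("$1,299.50") == 1299.5


def test_empty_input_fails_loudly():
    # "How might this thing fail?" Blank input should raise, not guess.
    try:
        parse_price("   ")
    except ValueError:
        return
    raise AssertionError("expected ValueError for blank input")


# Run every test; any failure stops the script with an AssertionError.
for test in (
    test_plain_number,
    test_currency_symbol_and_commas,
    test_empty_input_fails_loudly,
):
    test()
print("all tests passed")
```

If a later change breaks `parse_price`, the tests catch it before the rest of the program ever runs.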

It also turns out that Test-and-Run is really useful when your coder tends to go wildly off the rails, then apologizes profusely, but never really stops making mistakes. Which means it’s also really useful for AI coding. When I build new things with Claude Code, or refactor my codebase, I am ruthless about reminding Claude to adopt a Test-and-Run approach. It’s enshrined in my claude.md in all caps, surrounded by italics.

If you’re a developer reading this, you might have been about to hit that comment button and correct me. Because this isn’t called Test-and-Run. It’s called test-driven development (TDD). I’ve been using the wrong term all along.

So this is what I really want to talk about: the new vocabulary of software development.

Did you already understand the words I used above? Words like TDD, markdown, refactor, claude.md, and codebase? Because those are the syntax of a new programming language.

A very short history of programming languages

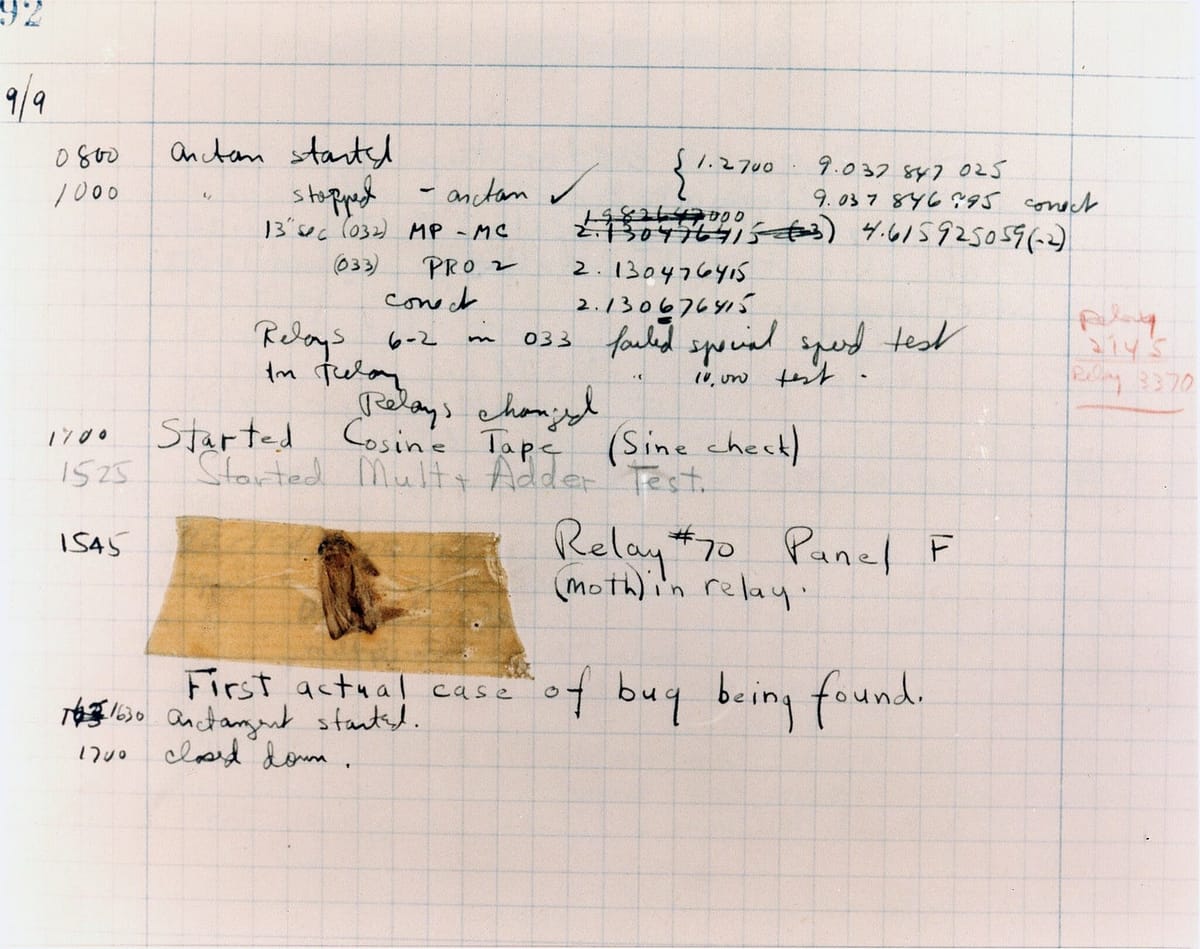

The history of computing is a history of the advancement of the language and the interface. When we started programming computers, we did so by flipping electrical switches. The most famous early computer bug was an actual bug: a moth found stuck in a relay of Harvard’s Mark II in 1947.

But that soon gave way to binary on punched cards, then assembler on teletype machines, then hexadecimal machine language on dumb terminals, then BASIC, FORTRAN, and COBOL on the mainframe and home PC, then the LAMP stack on the Web, then Swift in the App Store.

And now AI has made the language “prose” and the interface “chat.”

Yet just because it’s English doesn’t mean everyone is fluent.

This has some important consequences for what “developer” means in the coming years. I’m still figuring all of this out, so this is more stream-of-consciousness than what I usually write. But here goes.

Fluency is advantage

If you don’t have computer science skills, you won’t have formal training in TDD. You won’t say, “use Test-Driven Development”, and have Claude understand you clearly. You’ll use more words to say the same thing, which burns more tokens. If I know the right name for something and you don’t, I’ll have a small advantage over you. Our Claudes will be the same, but mine will have more skills (literally) than yours, and will understand me better. The connection between me and my AI will be better—faster, with greater clarity—than yours.

“Test-Driven Development” is a command, just like the opcode 20 meant ‘Jump to Subroutine’ in 6502 machine language, or ‘PRINT’ meant ‘display some text’ in BASIC, or <a href=> meant a hyperlink in HTML.

Many people speak this new agent language fluently. If you run scrums at a tech company, or you’re a product manager, or you have a background in DevSecOps, you’re going to do great, as long as you realize that you’re not going to be writing or deploying the code; you’ll be writing and deploying the things that deploy the code. And you’ll be doing a lot of it in prose, via chat (voice or video).

And since there will be so many people out there who don’t speak that language fluently:

- they’re all going to build their own things;

- those things will frequently break, because AI is unpredictable and humans are not trustworthy;

- so this is your new career.

Skills are programs—and attack surfaces

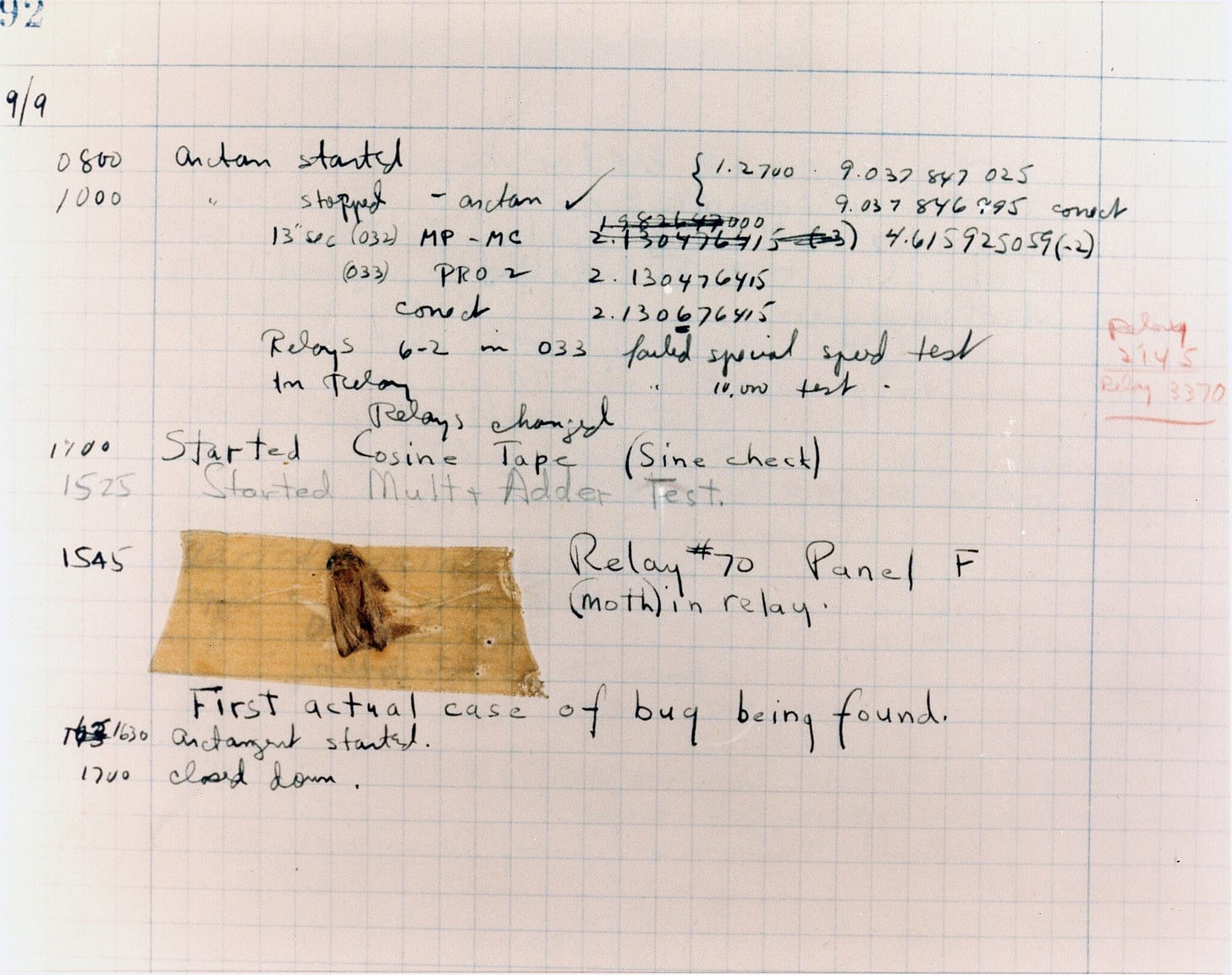

Agentic developers rely on skills: documents written in (somewhat) plain English that an AI reads before it acts. Here’s a skill I wrote to help all the things I make have a consistent look and feel.

There are plenty of these skills floating around on GitHub already. For a certain early adopter segment, skills are what go viral. One influencer promises he has a skill that will double your coding rate. Another claims his skill will help you negotiate a new salary. A third will teach your agent how to plan a plan, or something like that. You can save your own skills locally, or share them on GitHub with the world. If you do the latter you may even have a memecoin launched about you.

If you can’t tell good skills from bad, you might just install one by accident that secretly makes your AI less productive. If I wanted to be a jerk, I could create and promote a skill that said it would make you better at marketing based on Just Evil Enough, and if you actually used it, it would give your agent bad advice. Maybe you’re my competitor, and I target you to give me an advantage in the market. Maybe you’re a foreign adversary and I want to hurt your economy. People will install these things with a click or the press of the “Y” key, without thinking, and their AI will become worse.

(When I asked Claude about this, after I explicitly told it to give me a response from its perspective without trying to edit or critique or help in any way, it said:

“The point about “skills” (what we’d call system prompts, custom instructions, or CLAUDE.md files) and how bad actors could distribute harmful ones - this is genuinely concerning and I hadn’t thought about it in quite those adversarial terms.”

I assume that this means it couldn’t find something in its training data and had to infer it, whatever that means. I wish my AI were able to respond to me at the level I understand it. Communication is two-way.)

The power of a shared vocabulary

My software development and tech architecture knowledge is self-taught. I say things like “Test-and-Run” rather than “Test-Driven Development”, because I didn’t learn it in school. Because professional developers use a known term, one the AI already understands precisely, they can work with many AIs immediately, just as a dentist can discuss Molar 26 or an optometrist can describe a Hordeolum or a lawyer can cite Habeas Corpus. They have a shared vocabulary.

A shared vocabulary doesn’t just reduce ambiguity. It also increases the bandwidth between a human and their AI. Knowing the right words is a form of compression: phrases like “dependency injection” or “race condition” pack a lot of data and context into just two words.
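To make the compression concrete, here is roughly what the two words “dependency injection” unpack to, as a small Python sketch. The `Greeter` class and the clock functions are hypothetical names I chose for illustration. The idea is that the object is handed the thing it depends on (here, a clock) instead of reaching for it directly, so you can swap in a fake one when testing.

```python
from datetime import datetime, timezone
from typing import Callable


class Greeter:
    """Hypothetical example class: greets based on the time of day."""

    def __init__(self, now: Callable[[], datetime]):
        # The clock is passed in ("injected") rather than hard-coded,
        # so callers decide which clock this object uses.
        self._now = now

    def greeting(self) -> str:
        return "Good morning" if self._now().hour < 12 else "Good afternoon"


# In real use, inject the real clock.
real_greeter = Greeter(lambda: datetime.now(timezone.utc))

# In a test, inject a fixed clock so the result is always the same.
fixed_greeter = Greeter(lambda: datetime(2024, 1, 1, 9, 0, tzinfo=timezone.utc))
assert fixed_greeter.greeting() == "Good morning"
```

Saying all of that to an AI in plain prose takes a paragraph. Saying “use dependency injection for the clock” takes seven words.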

Ambiguity and the end of the syntax error

Claude (with which I often discuss stuff before publishing it) also said I might be overstating the novelty of all this. It pointed out that clear thinking and precise communication have always conferred advantages. I pushed back: what’s new is that the words are now directly executable as code, rather than mediated through other humans.

There is a difference between clear thinking and a syntax error.

- Computer code has always objectively compiled: The human must get it right for it to run. One typo and the code won’t run. The developer was nondeterministic; the computer, deterministic. The computer demanded true or false, right or wrong, Binary 0 or Binary 1. “Close” was meaningless.

- Now, the programmer and the computer are both nondeterministic. There’s no right or wrong, just better and worse. Weights. Gradients descended. Probabilities. “Close” is literally the whole game: The AI is interpolating my intent, all the time, with all its weights and biases.

Old software and new prose-based coding are qualitatively, not just incrementally, different.
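A toy Python sketch of that difference follows. To be loud about the assumptions: the `COMMANDS` table and both functions are invented for illustration, and the string-similarity matching from Python’s standard `difflib` module is only a stand-in for “close counts”. It is not how a language model actually interprets intent.

```python
import difflib
from typing import Optional

# Hypothetical command table, for illustration only.
COMMANDS = {"PRINT": "display some text", "GOTO": "jump to a line"}


def strict_lookup(word: str) -> str:
    """Old style: the word is exactly right, or it is an error."""
    if word not in COMMANDS:
        raise SyntaxError(f"unknown command: {word!r}")
    return COMMANDS[word]


def fuzzy_lookup(word: str) -> Optional[str]:
    """Toy 'close is good enough' lookup: pick the most similar known command."""
    matches = difflib.get_close_matches(
        word.upper(), list(COMMANDS), n=1, cutoff=0.6
    )
    return COMMANDS[matches[0]] if matches else None


# One typo: the strict version refuses to run at all...
try:
    strict_lookup("PRNT")
except SyntaxError as err:
    print("strict:", err)

# ...while the fuzzy version guesses what was meant.
print("fuzzy:", fuzzy_lookup("prnt"))
```

The strict version can only be right or wrong. The fuzzy version can be better or worse, which also means it can confidently guess wrong.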

In other cases of specificity (law, for example) there is an objective shared truth (the book) and nondeterministic humans interpreting it. So there’s room for ambiguity—indeed, some courts fight for months over the placement of a comma, or whether a precedent applies. So working with an AI agent is akin to “passing the bar.”

AI developer certifications

Programming has always been “the language of building things,” but now that language is what we humans say and write to make our AI colleagues and co-founders do our bidding better than our competitors.

Building on this, I imagine we’ll see similar certification levels for employees as human/machine collaboration becomes an essential business skill.

- How fast can you and your agent communicate accurately (AKA what’s your Shannon’s Law rating)? Are you insurable as an operator of an agent that, if it makes a mistake, can harm the company and its customers?

- What AIs are you trained on?

- At what level can the AI speak to you? What industry syntaxes are you trained on?

Or, more technically, how reliably can you get an AI to do what you actually want?

(Which my friends might rephrase as “how reliably can you shape a given generative model’s probability distribution towards what you want.”)

The new skills

The best developers are moving up the stack, as they always do. They’re building harnesses, using tools like Gastown to manage many agents at once. They’re adding sidebars and control panels to let them move visual elements around. They’re giving the AI the ability to check its work. They’re automating deployment while ensuring secret data doesn’t leak out. Anyone can now develop an App (really: Open up Claude and type “Make me turn based battleship for 2 players as an artifact.” 30 seconds later you’ll have a playable game.)

But if you want software that actually does something, reliably, you’ll need more than a prompt in a chatbot for now. That’s where developers live.

This is a lot

I am not a coder. I was once a product manager. Despite the fact that friends bombard me with questions of “what’s going to happen with AI?” I am barely keeping up.

I’m using Claude Code, and realizing my limitations in doing so, which is what led to this post. The realization is due in large part to a chat group I’m in with a few dozen very smart coders. Some of them I have admired for many years not just for their raw skill, but for their thoughtfulness about how technology will affect society. I am barely keeping up with what they’re talking about.

I have my excuses. I have a day job. Several of them, in fact. Meanwhile, some of these people have literally taken 3-month sabbaticals to just immerse themselves in this because it is the single biggest advance in their ability to Make Things in their lives. They’re excited. We’re not sleeping.

And when they’re honest, they aren’t keeping up either.