Big idea: The Objective Function

Facebook wants attention. Google wants desire. Amazon wants your next purchase. As algorithms drive more of our lives, we need to ask: what are they optimizing for, and is that what we actually want?

As we put together the initial lineup and program for FWD50, we’re working on a central theme for our November event. With the pace of innovation and technological change, it’s hard to choose just one.

We’ve narrowed it down to six big ideas that keep coming up in our travels and discussions. Over the next six posts, we’ll look at each of them in a bit more detail.

Facebook wants our attention. Google wants to know what we desire and search for. Amazon wants our next purchase. Everything has a motive, ulterior or otherwise, and as more of our lives are driven by algorithms, we need to ask ourselves what those goals are.

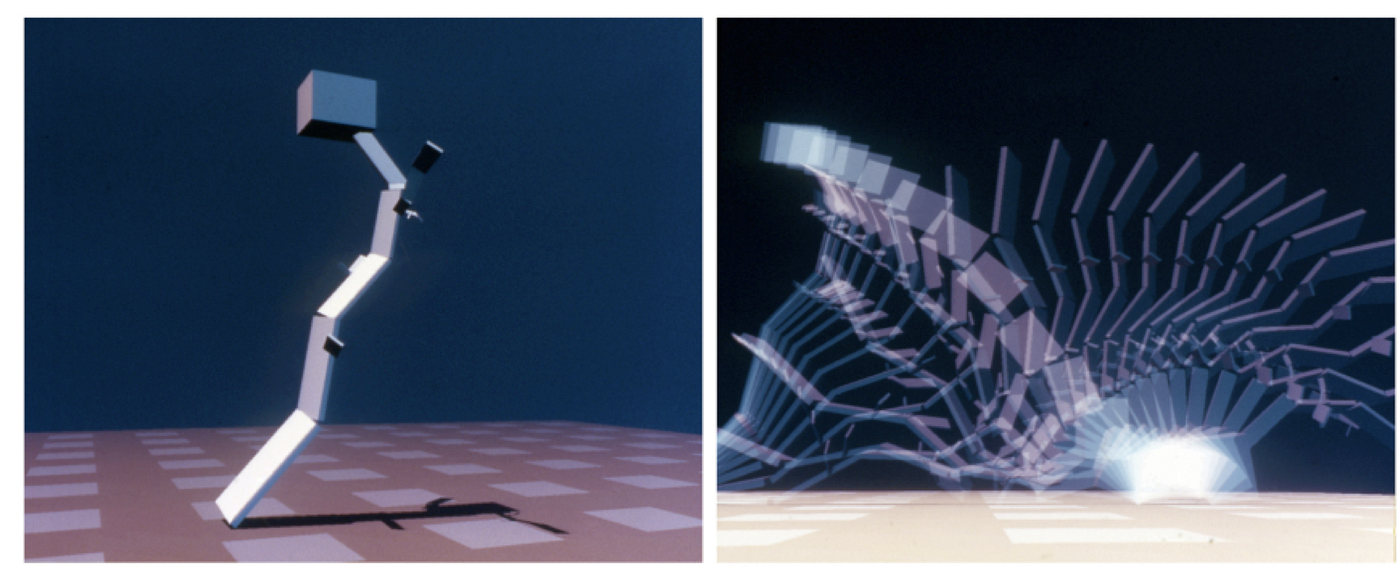

When we train AI to win at a video game, we give it something to maximize (for example, the score in Breakout). The underlying algorithm then works furiously to find better and better strategies. AlphaGo, from Google’s DeepMind, played millions of games against itself until it was good enough to beat the world’s best human players.
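To make the idea of "something to maximize" concrete, here is a minimal sketch in Python. It is a toy, not how DeepMind’s Breakout or AlphaGo systems work (those used reinforcement learning with neural networks). Instead, it is a random-search hill climber that tweaks a list of numbers and keeps any change that raises the score. The `score` function is a hypothetical stand-in for a game score, chosen so that it peaks when every parameter equals 0.7; `hill_climb` and its parameters are likewise illustrative names.

```python
import random


def score(strategy: list[float]) -> float:
    """Hypothetical objective function: higher is better.

    A stand-in for a game score; it reaches its maximum (0.0)
    when every parameter in the strategy equals 0.7.
    """
    return -sum((x - 0.7) ** 2 for x in strategy)


def hill_climb(objective, n_params: int = 5, steps: int = 10_000,
               step_size: float = 0.05, seed: int = 0) -> tuple[list[float], float]:
    """Randomly tweak a strategy, keeping any change that raises the objective."""
    rng = random.Random(seed)
    # Start from a random strategy.
    best = [rng.random() for _ in range(n_params)]
    best_score = objective(best)
    for _ in range(steps):
        # Propose a small random change to the current best strategy.
        candidate = [x + rng.gauss(0, step_size) for x in best]
        candidate_score = objective(candidate)
        # The optimizer only "knows" the number the objective returns.
        if candidate_score > best_score:
            best, best_score = candidate, candidate_score
    return best, best_score


if __name__ == "__main__":
    strategy, final_score = hill_climb(score)
    print([round(x, 2) for x in strategy], round(final_score, 4))
```

Note that the optimizer never sees the goal behind the number, only the number itself. If `score` rewarded the wrong thing, `hill_climb` would pursue it just as diligently.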

Here’s an AI that, with the game’s score as its objective function, got better at Breakout than any human in around 3 hours.

Sometimes, though, the algorithm produces weird or unintended results. One team of researchers gave competing algorithms a fixed amount of virtual “material” and set their goal as “move as fast as possible for ten seconds,” hoping the algorithms would evolve vehicles.

Instead, the winning algorithm built a really, really tall virtual tree, heavy at the top. When it fell over, it moved faster than anything the other algorithms had built.

They wanted a car; they got a tree.

Objective functions are good, until they’re not. They’re factories of unintended consequences. That means we need to evaluate them for bias, unfairness, and real-world suitability. Are they accidentally racist? Do they reinforce existing biases? Do they make the world less fair?

We also need to consider the costs when they go wrong. Is the damage simply setting the wrong price in an e-commerce store, or denying someone welfare? Does the algorithm just suggest songs you won’t like, or does it drive your car into a cyclist?

Government policies (and indeed, governments themselves) also have objective functions. The policies and systems we build are attempts to turn an objective (fairer workplaces, more sustainable energy, better national security, improved education) into a set of actions. What if we get them wrong? What if we can’t properly test them before deploying them in the real world? And ultimately, how do we decide whether the underlying goal of an algorithm, policy, or system is the right one?

(I wrote about objective functions in more detail a couple of months ago, if you want to dig deeper.)